Timeouts: 2015/16 PlusLiga

Ben Raymond (ben@untan.gl), Mark Lebedew (@homeonthecourt)

This page gives the detailed breakdown of the analyses discussed in this post on Mark’s blog.

1 Data and software

All analyses have been conducted in R. An R package for reading and analyzing DataVolley scouting files is in development: see https://github.com/raymondben/datavolley.

The data come from a number of matches from the 2015/16 PlusLiga (Polish League). A brief summary is given below. There are more matches for some teams than others, but all teams are represented by at least 17 matches.

Number of matches: 143

Match dates: 2015-10-30 to 2016-04-11

Number of individual players: 204

Number of data rows: 199890

Number of individual points: 24080

Number of serves: 24077

Number of timeouts: 1463

Number of technical timeouts: 1016

| Team | Played | Won | Win rate | Sets played | Sets won | Set win rate | Points for | Points against | Points ratio |

|---|---|---|---|---|---|---|---|---|---|

| Asseco Resovia Rzeszów | 23 | 15 | 0.652 | 89 | 57 | 0.640 | 2090 | 1872 | 1.116 |

| AZS Częstochowa | 20 | 5 | 0.250 | 80 | 28 | 0.350 | 1649 | 1805 | 0.914 |

| AZS Politechnika Warszawska | 22 | 11 | 0.500 | 83 | 40 | 0.482 | 1845 | 1880 | 0.981 |

| BBTS Bielsko-Biała | 18 | 6 | 0.333 | 69 | 25 | 0.362 | 1456 | 1580 | 0.922 |

| Cerrad Czarni Radom | 21 | 13 | 0.619 | 77 | 45 | 0.584 | 1784 | 1663 | 1.073 |

| Cuprum Lubin | 17 | 9 | 0.529 | 66 | 37 | 0.561 | 1511 | 1462 | 1.034 |

| Effector Kielce | 23 | 5 | 0.217 | 89 | 28 | 0.315 | 1833 | 2084 | 0.880 |

| Indykpol AZS Olsztyn | 17 | 9 | 0.529 | 65 | 34 | 0.523 | 1428 | 1436 | 0.994 |

| Jastrzębski Węgiel | 26 | 13 | 0.500 | 105 | 53 | 0.505 | 2319 | 2307 | 1.005 |

| LOTOS Trefl Gdańsk | 21 | 14 | 0.667 | 82 | 46 | 0.561 | 1842 | 1769 | 1.041 |

| Łuczniczka Bydgoszcz | 18 | 5 | 0.278 | 62 | 19 | 0.306 | 1363 | 1495 | 0.912 |

| MKS Będzin | 17 | 3 | 0.176 | 62 | 17 | 0.274 | 1263 | 1479 | 0.854 |

| PGE Skra Bełchatów | 22 | 17 | 0.773 | 85 | 55 | 0.647 | 1964 | 1813 | 1.083 |

| ZAKSA Kędzierzyn-Koźle | 21 | 18 | 0.857 | 72 | 59 | 0.819 | 1732 | 1434 | 1.208 |

2 Timeouts

The motivation for this analysis is to examine the effects of timeouts in volleyball matches, particularly with respect to serve errors and sideouts.

2.1 General timeout patterns

We start with a general overview of timeout calling patterns. How often do different teams call timeouts?

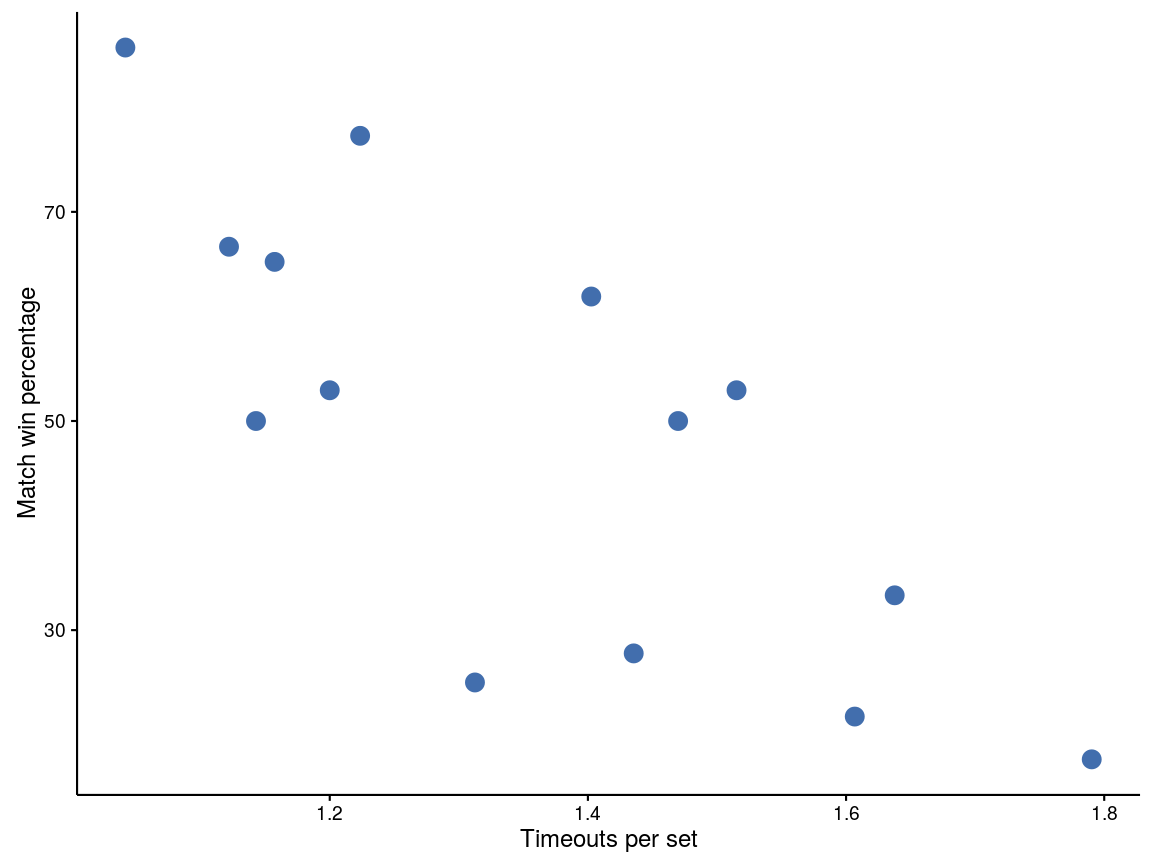

Broadly speaking, teams that have a higher win rate (percentage of matches won) call fewer timeouts. A plot of this relationship is shown below:

Figure 1: Timeouts per set plotted against match win percentage. Each point represents one team

Timeouts are almost universally called by the team that is not currently serving:

18 timeouts (of 1463 total) were called by the serving team.

Note: we believe that many of these are mistakes in the scouting files, so the actual number may well be lower than this.

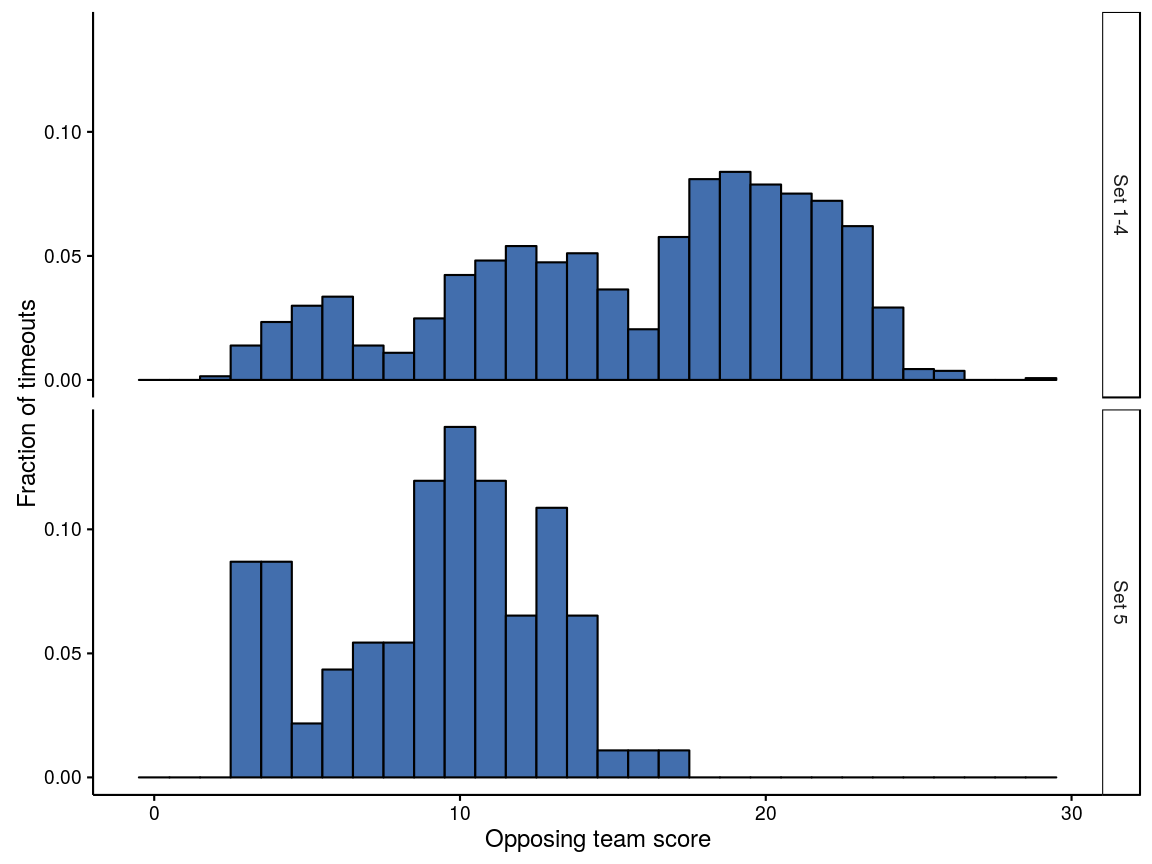

When are timeouts called? Below is a histogram of the opposing team’s score (at the time that each timeout was called). Sets 1–4 are plotted separately from set 5, because set 5 is played to 15 points rather than 25.

Figure 2: Histograms of timeouts against opposing team’s score

For sets 1–4, there are technical timeouts at 8 and 16 points, which correspond to the dips in the upper panel. Coaches will typically avoid using a team timeout when a technical timeout is imminent. These dips aside, there is a general increase in the number of timeouts called as the set progresses, peaking around 20 points. Set 5 (during which there are no technical timeouts) shows a peak around 3–4 points, a second around 10 points, and possibly a third around 13 points.

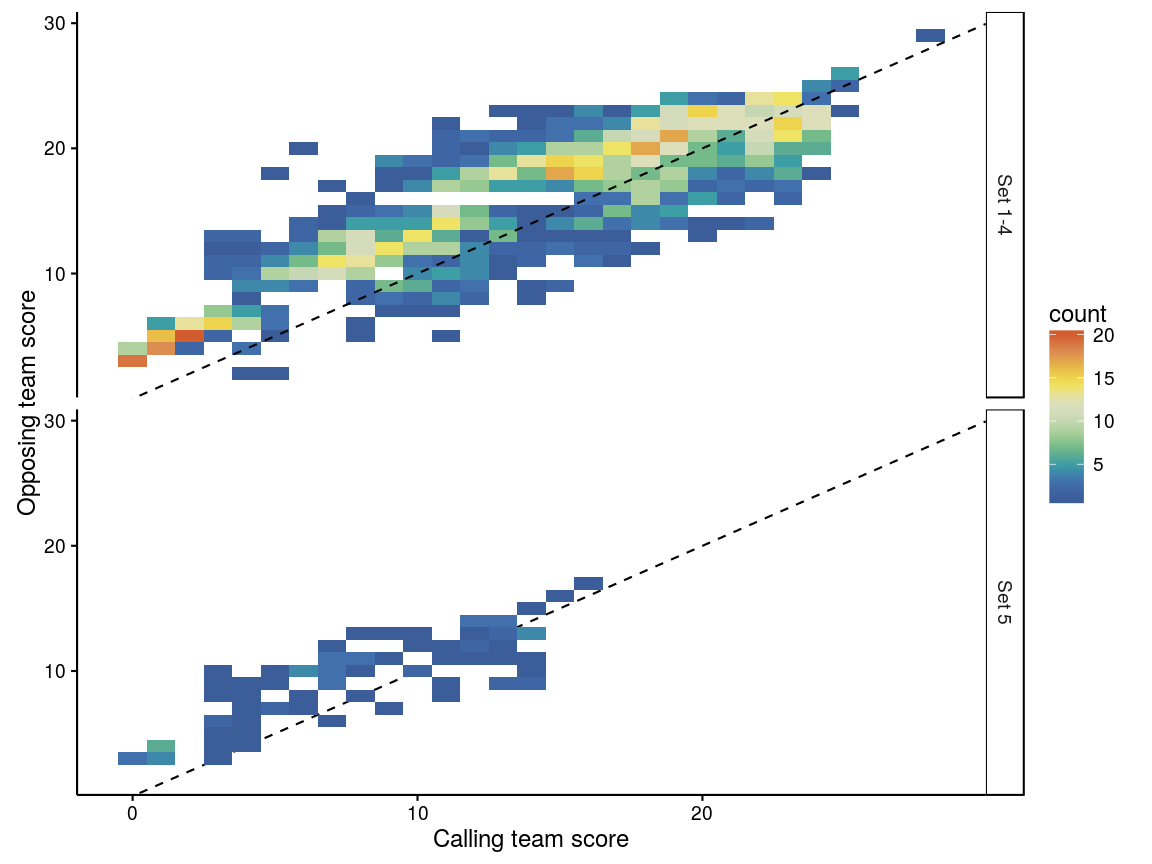

This can be plotted with both the calling team’s score and the opposing team’s score. The colours in the plot below show the number of timeouts called for each combination of calling team’s score and opposing team’s score:

Figure 3: Heatmaps of timeouts against calling and opposing team scores

There is a particular hotspot when the opposing team’s score is around 5 and the calling team’s score is lower, meaning that the opposition has jumped out to a quick start. Most timeouts lie above the 1:1 dashed line, meaning that the opposing team is ahead of the calling team. There are also a number of timeouts called when the calling team is ahead of the opposition, mostly towards the end of the set. These might correspond to situations where the opposing team has scored a run of points and is catching up to the calling team, but this was not examined further here.

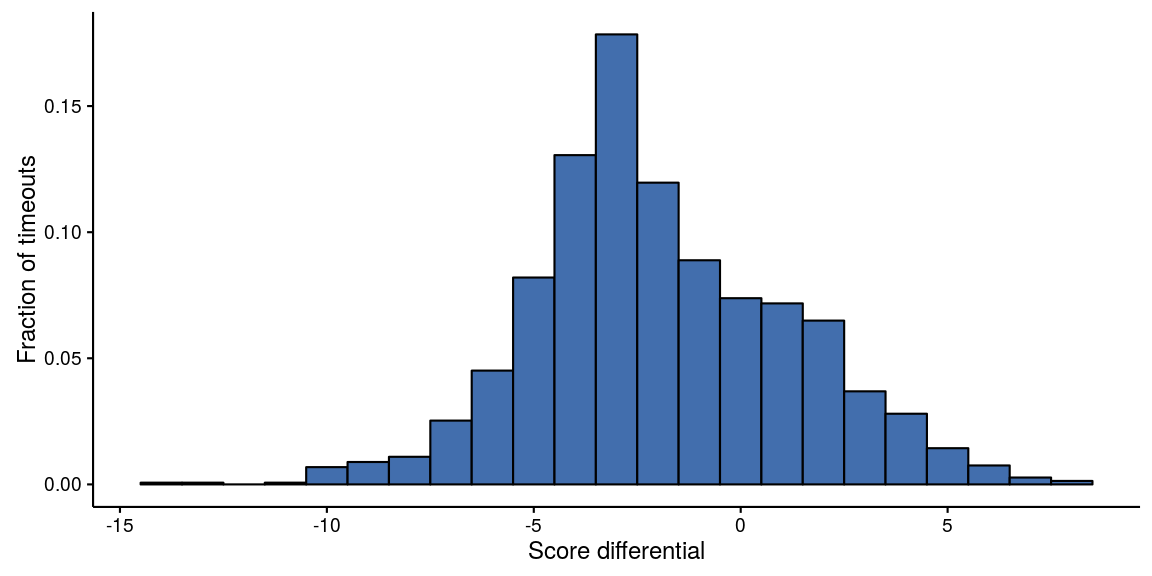

A simplified version of the previous graph is below, showing only the score differential (calling team’s score minus the opposing team’s score) when each timeout was called:

Figure 4: Histogram of timeout frequency as a function of score differential

Only a small proportion (about one quarter) of timeouts were called by a team that was leading at the time.

None of the above is particularly surprising, but it serves to confirm that timeouts are generally called by the receiving team, at times when some sort of intervention might be expected to be helpful to the outcome.

2.2 Effect of timeouts on sideouts and serve effort

The following indicators of serve success and effort are considered:

- whether the serve was a jump serve or not. The data in fact provide “serve type”, which is one of jump, jump-float, float, or unknown serve type. However, the latter two categories make up a vanishingly small fraction of overall serves, and so “serve type” can be adequately treated by converting it to a binary indicator of whether the serve was a jump serve or not

- serve error rate (the proportion of serves where a service error was made)

- ace rate (the proportion of serves that were aces)

- perfect pass rate (the proportion of receptions rated as a “perfect pass”).

The following indicators of the performance of the receiving team are calculated:

- sideout rate. Sideouts are expressed from the perspective of the receiving team (i.e. a sideout is a reception where the receiving team wins the point)

- earned sideout rate (sideout rate excluding service errors)

- first ball sideout rate (proportion of receptions where the first attack by the receiving team was successful)

Indicators analogous to the sideout indicators, but expressed from the perspective of the serving team, are also calculated. These are referred to as:

- serve loss rate (the proportion of serves where the serving team lost the point)

- earned serve loss rate (serve loss rate excluding service errors)

- first ball serve loss rate (proportion of serves where the first attack by the receiving team was successful).
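The indicator definitions above can be made concrete with a small sketch. The original analysis was done in R; the Python below is only an illustration, and the `Serve` record, its field names and the five example serves are all hypothetical (they are not the columns of the datavolley package or real PlusLiga data). Perfect pass rate is left out because it needs a reception rating. Following the tables later in this report, a "reception" is taken to be any serve that was not a service error.

```python
from dataclasses import dataclass


@dataclass
class Serve:
    serve_type: str         # "jump", "jump-float", "float" or "unknown"
    error: bool             # the serve was a service error
    ace: bool               # the serve was an ace
    receiver_won: bool      # the receiving team won the point
    first_attack_won: bool  # the receiving team's first attack won the point


# Hypothetical records, for illustration only.
serves = [
    Serve("jump", True, False, True, False),
    Serve("jump", False, False, True, True),
    Serve("jump-float", False, True, False, False),
    Serve("jump-float", False, False, True, False),
    Serve("jump", False, False, False, False),
]


def rate(flags):
    """Proportion of True values in an iterable of booleans."""
    flags = list(flags)
    return sum(flags) / len(flags)


# Serves that were not service errors.
receptions = [s for s in serves if not s.error]

indicators = {
    "jump_serve_rate": rate(s.serve_type == "jump" for s in serves),
    "serve_error_rate": rate(s.error for s in serves),
    "ace_rate": rate(s.ace for s in serves),
    "sideout_rate": rate(s.receiver_won for s in serves),
    "earned_sideout_rate": rate(s.receiver_won for s in receptions),
    "first_ball_sideout_rate": rate(s.first_attack_won for s in receptions),
}
```

The serve loss indicators are the same quantities viewed from the serving team's side, so in this sketch they would take the same values as the corresponding sideout indicators.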

These indicators will be evaluated for each of the following situations:

- the first serve of a set

- the first serve following a technical timeout

- the first serve following a normal timeout

- all other serves.

Statistical tests have been conducted to evaluate whether a given indicator varies across the different situations. What does a significant result mean in this context? We are assuming that our dataset is a sample that is representative of some larger volleyball universe. The p-value of a test performed on our data is the probability of seeing differences at least as large as those in our sample if the indicator did not in fact vary in the larger universe. A small p-value therefore suggests that the result is unlikely to be a quirk of our particular sample.

The statistical models used here are generally binomial mixed-effects models, in which the response is the indicator in question, and a random effect by individual player is included. A “binomial” model simply means that the response can take one of two values (e.g. if we are examining serve errors, then a given serve was either an error or it was not). The random effect allows individual players to vary in their performance. Including this random term will also account for the fact that some players might perform the skill in question more often than others across the various serve categories.

In general, we test by fitting two models for each indicator. The first model has a single fixed predictor term (the serve category). The second has no predictor terms — that is, it assumes that the indicator does not vary with serve category. We then compare the fits of the two models. If the first model is a statistically better fit to the data (p<0.05), then it supports the idea that the indicator does indeed vary with serve category.

2.2.1 Results

Below is a tabulation of the number of individual players associated with each of the serve categories, and the average number of serves that each player made in that category.

| Serve category | Number of unique servers | Average number of serves per server |

|---|---|---|

| General play | 176 | 119.648 |

| First of set | 105 | 5.171 |

| Followed timeout | 153 | 9.542 |

| Followed technical timeout | 147 | 6.912 |

Notice that the “first serve of the set” category represents a much more limited number of players than the other categories. This is of course because the starting rotation of each team is relatively consistent from set to set. The number of players associated with serves after technical timeouts is next smallest, because technical timeouts occur at fixed scores (first team to 8 and 16 points) thus restricting the possible rotations that can occur at these times.

For each indicator a brief summary of the data is shown, along with “raw” and model estimates of rates. The raw rate is calculated directly from the data (e.g. number of errors divided by number of serves), whereas the model estimate is the same rate but adjusted by the random effect in the model for the individual players involved.

2.2.1.1 Serve error rate

The proportion of serves where a service error was made.

| Serve category | Serves | Errors | Raw serve error rate from data | Serve error rate model estimate |

|---|---|---|---|---|

| General play | 21058 | 3523 | 0.167 | 0.163 |

| First of set | 543 | 62 | 0.114 | 0.118 |

| Followed timeout | 1460 | 216 | 0.148 | 0.139 |

| Followed technical timeout | 1016 | 126 | 0.124 | 0.122 |

Is there an overall difference in the service error rate in the various serve categories?

Significance of the serve category term: p<0.001

Thus, the serve error rate during general play appears to be higher than in other situations.
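As a rough illustration of the two-model comparison, the sketch below runs a likelihood ratio test on the counts in the table above. It is a deliberate simplification and not the model used in this report: it has no random effect for the individual server, so it treats every serve as independent, and it is written in Python rather than the R used for the actual analysis. The function names are made up for this example.

```python
import math

# (serves, service errors) per serve category, from the table above.
counts = {
    "General play": (21058, 3523),
    "First of set": (543, 62),
    "Followed timeout": (1460, 216),
    "Followed technical timeout": (1016, 126),
}


def binom_loglik(n, k, p):
    """Binomial log-likelihood of k errors in n serves (constant term dropped)."""
    return k * math.log(p) + (n - k) * math.log(1 - p)


def chi2_sf_3df(x):
    """Upper tail probability of a chi-squared distribution with 3 degrees of freedom."""
    return math.erfc(math.sqrt(x / 2)) + math.sqrt(2 * x / math.pi) * math.exp(-x / 2)


total_n = sum(n for n, _ in counts.values())
total_k = sum(k for _, k in counts.values())

# Model without serve category: one pooled error rate for all serves.
ll_null = sum(binom_loglik(n, k, total_k / total_n) for n, k in counts.values())
# Model with serve category: a separate error rate for each category.
ll_full = sum(binom_loglik(n, k, k / n) for n, k in counts.values())

# Four rates versus one rate gives 3 extra parameters, hence 3 degrees of freedom.
lr_stat = 2 * (ll_full - ll_null)
p_value = chi2_sf_3df(lr_stat)
```

Even without the player effect, this simplified test gives a p-value below 0.001, in line with the result reported above. The mixed model remains the better tool because it allows for the same players serving many times.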

2.2.1.2 Ace rate

The proportion of serves that were aces.

| Serve category | Serves | Aces | Raw ace rate from data | Ace rate model estimate |

|---|---|---|---|---|

| General play | 21058 | 1126 | 0.053 | 0.049 |

| First of set | 543 | 22 | 0.041 | 0.038 |

| Followed timeout | 1460 | 94 | 0.064 | 0.056 |

| Followed technical timeout | 1016 | 51 | 0.050 | 0.045 |

Significance of the serve category term: p=0.295

Nothing consistent here, and the result is not significant.

2.2.1.3 Perfect pass rate

The proportion of receptions that were rated as a perfect pass.

| Serve category | Receptions | Perfect passes | Raw perfect pass rate from data | Perfect pass rate model estimate |

|---|---|---|---|---|

| General play | 17535 | 3070 | 0.175 | 0.166 |

| First of set | 481 | 98 | 0.204 | 0.197 |

| Followed timeout | 1244 | 243 | 0.195 | 0.192 |

| Followed technical timeout | 890 | 167 | 0.188 | 0.178 |

Significance of the serve category term: p=0.037

The model in this case has an additional random term for the serving player (that is, it tries to account for idiosyncrasies in both the serving player’s serving ability as well as the receiving player’s passing ability).

Perfect passes appear to be less frequent during general play than other serve categories, particularly after timeouts and the first serve of a set.

2.2.1.4 Sideout rate

For sideout indicators, the result is modelled in the same way as before (binomial mixed model) but with a random effect on team rather than individual player (because the sideout rate most likely depends more heavily on the overall team, rather than just the player who is serving).

| Serve category | Serves | Sideouts | Raw sideout rate from data | Sideout rate model estimate |

|---|---|---|---|---|

| General play | 21058 | 14016 | 0.666 | 0.667 |

| First of set | 543 | 369 | 0.680 | 0.680 |

| Followed timeout | 1460 | 975 | 0.668 | 0.672 |

| Followed technical timeout | 1016 | 680 | 0.669 | 0.677 |

Significance of the serve category term: p=0.799

There is very little evidence for differences in sideout rate across the serve categories.

2.2.1.5 Earned sideout rate

Sideout and serve loss rates excluding service errors (i.e. where the receiving team “earned” the sideout).

| Serve category | Receptions | Earned sideouts | Raw earned sideout rate from data | Earned sideout rate model estimate |

|---|---|---|---|---|

| General play | 17535 | 10493 | 0.598 | 0.600 |

| First of set | 481 | 307 | 0.638 | 0.639 |

| Followed timeout | 1244 | 759 | 0.610 | 0.616 |

| Followed technical timeout | 890 | 554 | 0.622 | 0.630 |

Significance of the serve category term: p=0.073

The differences are larger than with standard sideout rate, but are still relatively small and the model that includes serve_category is not a significantly better fit to the data than the model without it. Nevertheless, earned sideout rates might be marginally lower in general play than the other three categories. This is consistent with the higher serve error rate observed during general play than the other three categories.
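The link between serve errors, earned sideouts and overall sideouts can be checked directly from the raw counts. A service error is an automatic sideout, so the overall sideout rate equals the error rate plus the earned sideout rate applied to the remaining serves. The Python sketch below (the original analysis was in R) uses only the counts from the tables above; the variable names are illustrative.

```python
# (serves, service errors, sideouts) per serve category, from the tables above.
counts = {
    "General play": (21058, 3523, 14016),
    "First of set": (543, 62, 369),
    "Followed timeout": (1460, 216, 975),
    "Followed technical timeout": (1016, 126, 680),
}

summary = {}
for category, (serves, errors, sideouts) in counts.items():
    error_rate = errors / serves
    receptions = serves - errors              # serves that were not errors
    earned_rate = (sideouts - errors) / receptions
    # Sideout = service error, or an earned sideout on a serve that went in.
    recombined = error_rate + (1 - error_rate) * earned_rate
    summary[category] = {
        "error_rate": error_rate,
        "earned_sideout_rate": earned_rate,
        "sideout_rate": sideouts / serves,
        "recombined_sideout_rate": recombined,
    }
```

This shows how a higher serve error rate and a lower earned sideout rate in general play can offset each other, leaving the overall sideout rate nearly the same across categories.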

2.2.1.6 First ball sideout rate

Proportion of receptions where the first attack by the receiving team was successful (service errors are excluded).

| Serve category | Receptions | First ball sideouts | Raw first ball sideout rate from data | First ball sideout rate model estimate |

|---|---|---|---|---|

| General play | 17535 | 7506 | 0.428 | 0.428 |

| First of set | 481 | 224 | 0.466 | 0.465 |

| Followed timeout | 1244 | 556 | 0.447 | 0.450 |

| Followed technical timeout | 890 | 403 | 0.453 | 0.457 |

Significance of the serve category term: p=0.066

As with earned sideout rate, the differences do not quite reach significance. Nevertheless, first ball sideout rates might be lower in general play than the other three categories.

2.2.1.7 Jump serve rate

In this case a simpler model is used, with no random effect by player, because players tend to be consistent in whether they jump serve or not (which causes problems in the numerical fitting of the model).

| Serve category | Serves | Jump serves | Raw jump serve rate from data | Jump serve rate model estimate |

|---|---|---|---|---|

| General play | 21058 | 11908 | 0.565 | 0.565 |

| First of set | 543 | 312 | 0.575 | 0.575 |

| Followed timeout | 1460 | 817 | 0.560 | 0.560 |

| Followed technical timeout | 1016 | 519 | 0.511 | 0.511 |

Significance of the serve category term: p=0.007

The results suggest that jump serves are less commonly used after technical timeouts. Bear in mind that the starting rotation of each team is relatively consistent from set to set, so the jump serve rate for the first serve of a set is probably most strongly determined by the players starting in position 1 (and whether they prefer jump-serving or not), rather than by any psychological or other effect of being the first serve of the set.

2.3 Timeouts over the course of a set

In the previous section we found little evidence to support the idea that overall sideout rate differs between after-timeout serves and general play. Are there more subtle effects? For example, does the effectiveness of a timeout change as a set progresses? Here we are focusing on sideout rate, and only comparing after-timeout serves to all other serves.

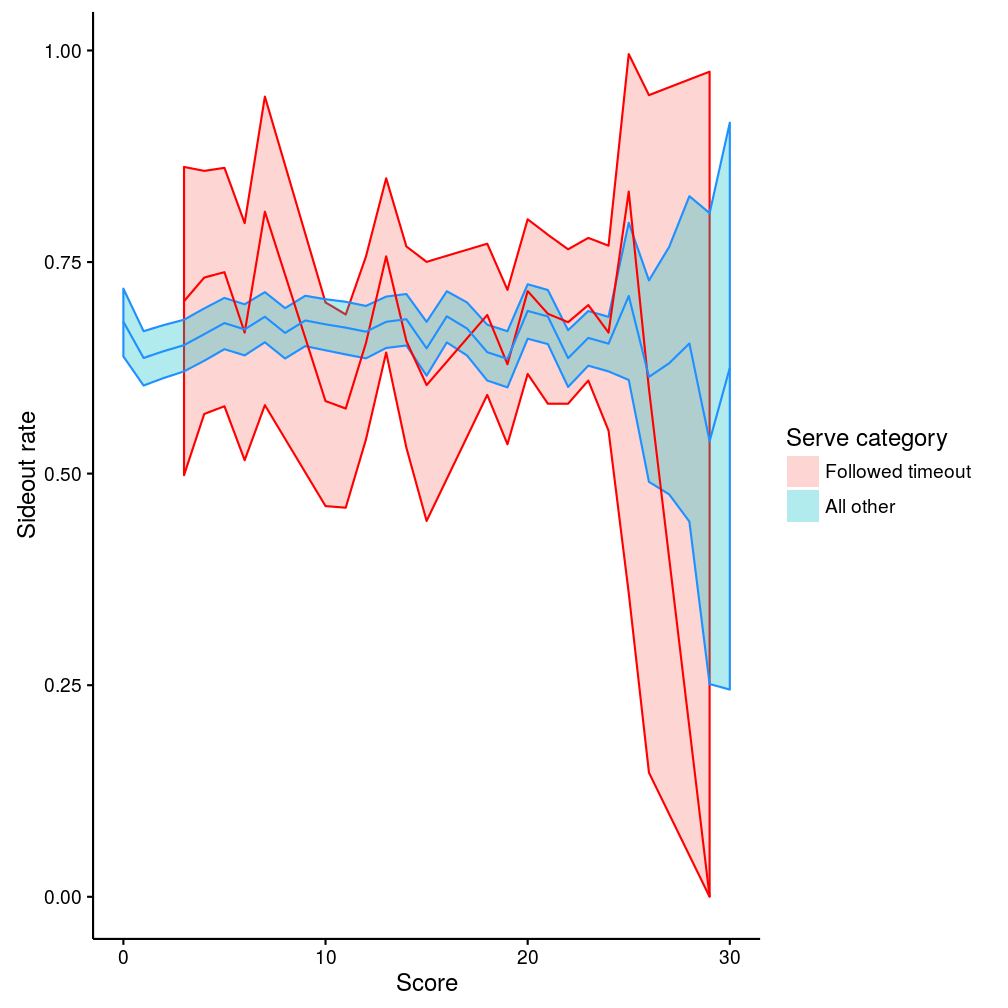

2.3.1 Set score

Does sideout rate vary over the course of a set? The data are summarised below. The lines show the mean sideout rates and the shaded bands their 95% confidence intervals, for different values of “score” (where “score” here means the higher of the two teams’ scores at the beginning of the rally):

Figure 5: Sideout rate by set score

During non-timeout play (blue line) the sideout rate is fairly constant. The after-timeout rate (red line) is very similar to the blue line. The shaded bands are wider, indicating that we are less confident in our estimate (because there are fewer data for after-timeout serves than for other serves), but there is no substantive difference between the two lines. (The big dip in the red line above 25 points is an artifact of the lack of data in this region.)
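To see why fewer serves give a wider band, here is a minimal Python sketch of a 95% confidence interval for a proportion. It assumes the simple normal-approximation (Wald) interval, which may not be the method used to draw the figure, and it uses the overall counts from the sideout rate table in section 2.2.1.4 rather than the per-score counts behind the plot.

```python
import math


def wald_interval(successes, n, z=1.96):
    """Normal-approximation 95% confidence interval for a proportion."""
    p = successes / n
    half_width = z * math.sqrt(p * (1 - p) / n)
    return p - half_width, p + half_width


# Overall counts (sideouts, serves) from the sideout rate table.
general_play = wald_interval(14016, 21058)
after_timeout = wald_interval(975, 1460)

width_general = general_play[1] - general_play[0]
width_timeout = after_timeout[1] - after_timeout[0]
```

With roughly 14 times fewer serves, the after-timeout interval is a little under four times as wide, and the two intervals overlap.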

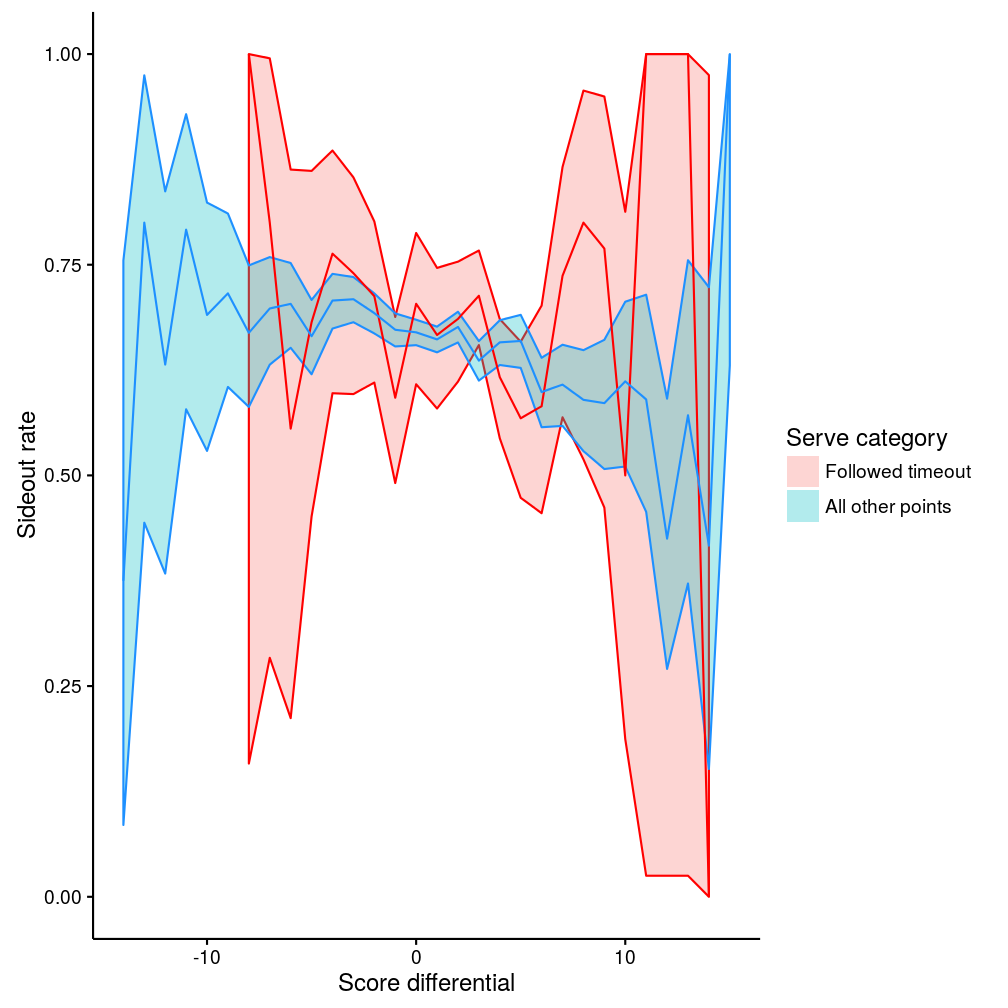

2.3.2 Score differential

What about score differential? Here, differential is defined relative to the serving team (so a positive score differential means that the serving team is ahead). We don’t distinguish which team called a timeout (though as previously shown it’s overwhelmingly the receiving team).

The data are summarised below (the lines show the mean sideout rates and the shaded bands their 95% confidence intervals):

Figure 6: Sideout rate by score differential

During non-timeout points (blue line), there is a clear relationship between sideout rate and score differential, such that sideout rate is higher when the serving team is behind, and sideout rate is lower when the serving team is ahead (exactly as one would expect). The relationship after timeout is not quite so clear. For relatively small score differential (say, -5 to +5 points difference) the timeout line (red) looks largely like the blue line. At more extreme score differentials (below -5 or above +5) the data are less consistent and the confidence intervals much wider, indicating that there isn’t sufficient data to draw strong conclusions. Overall, though, the confidence intervals overlap and so there is little to support the idea that the relationship between sideout rate and score differential is affected by timeouts.

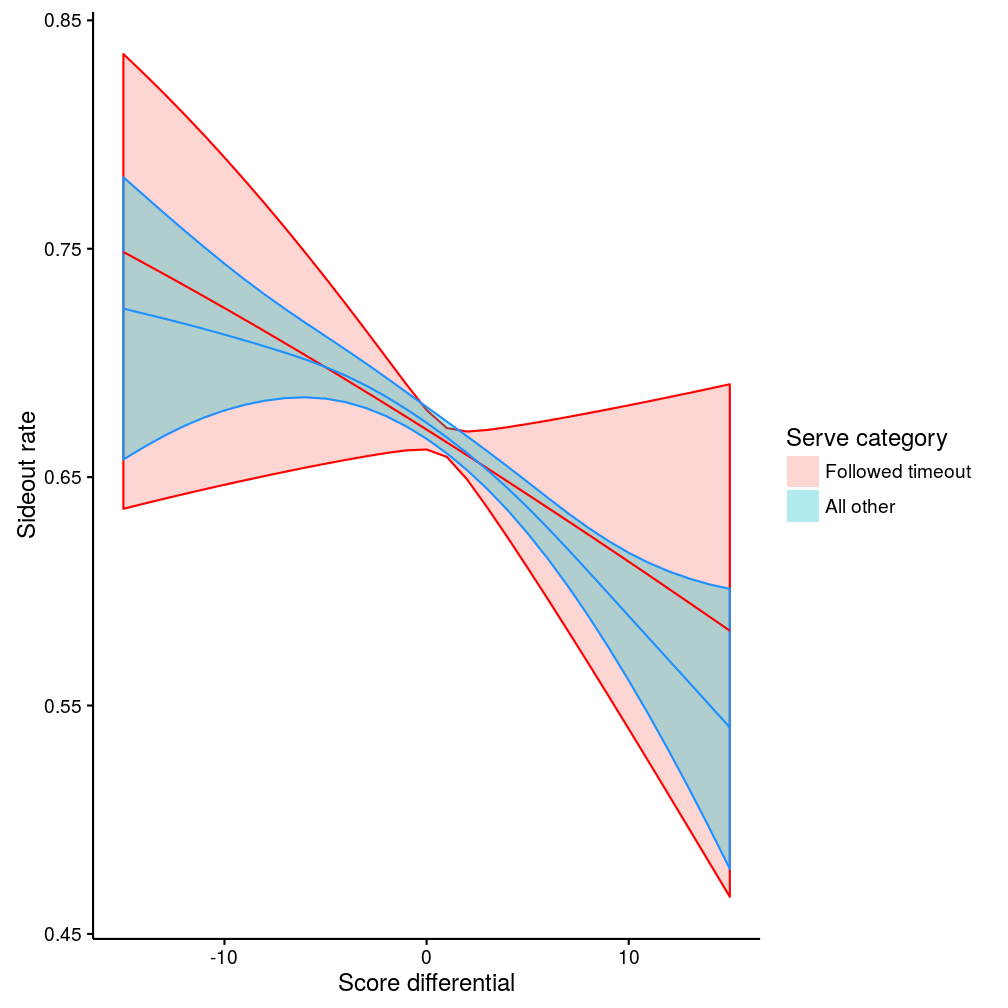

If we are prepared to assume that sideout rate should vary smoothly as a function of score differential, then we can fit a model, and this shows the relationships a little more clearly (a generalised additive model with smoothing spline was used here):

Figure 7: Sideout rate by score differential, from smoothed model

3 Serve runs

First we find runs of serves, and tabulate how many runs we have of length 1, 2, 3, etc. The result can be compared with what we would expect given our overall sideout rate. That rate is about 67%, so we expect about 67% of serve runs to be of length 1. Runs of length two must occur when the first serve is not sided out but the second is, which will happen with frequency (1-0.67)*0.67, or about 22% of serve runs. And so on for longer runs (this calculation assumes that every serve is sided out with the same probability, independently of the serves before it):

| Serve run length | Fraction of serve runs | Fraction expected |

|---|---|---|

| 1 | 0.683 | 0.666 |

| 2 | 0.213 | 0.222 |

| 3 | 0.068 | 0.074 |

| 4 | 0.024 | 0.025 |

| 5 | 0.008 | 0.008 |

| 6 | 0.003 | 0.003 |

| 7 | 0.001 | 0.001 |

| 8 | 0.000 | 0.000 |

| 9 | 0.000 | 0.000 |

| 10 | 0.000 | 0.000 |

So the numbers we are extracting from the data are about right. The small differences are at least partly due to the ends of sets (say a set finishes with a run of three serves: we do not count this as a run of three because it didn’t end with a sideout — maybe it would have continued for several more serves had the end of the set not brought it to a premature halt).
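The "fraction expected" column follows a geometric distribution: a run of length k needs k-1 serves that are not sided out, followed by one that is. A short Python sketch of that calculation is below (the original analysis was in R). The sideout rate of 0.666 is an assumption, chosen because it matches the first row of the expected column; the function name is illustrative.

```python
# Overall sideout rate; 0.666 is assumed here because it reproduces the
# first row of the "Fraction expected" column.
sideout_rate = 0.666


def expected_run_fraction(length, p=sideout_rate):
    """Probability that a serve run lasts exactly `length` serves.

    Assumes each serve is sided out independently with probability p.
    """
    return (1 - p) ** (length - 1) * p


expected = [round(expected_run_fraction(k), 3) for k in range(1, 11)]
```

Rounded to three decimal places, these values agree with the expected column of the table for run lengths 1 to 10.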

Now we can look at serve error and sideout rates according to the positions of serves in their runs (e.g. is a serve error more likely on the first serve in a run than on the second?). We only consider runs up to five serves in length, to keep the output manageable (and as can be seen from the above table, runs greater than five in length represent less than one percent of serve runs). Data summary:

| Team | Run position | Number of serves | Number of serve errors | Serve error rate |

|---|---|---|---|---|

| ALL TEAMS | 1 | 16308 | 2677 | 0.164 |

| ALL TEAMS | 2 | 5178 | 852 | 0.165 |

| ALL TEAMS | 3 | 1694 | 244 | 0.144 |

| ALL TEAMS | 4 | 580 | 91 | 0.157 |

| ALL TEAMS | 5 | 200 | 34 | 0.170 |

We fit a model of serve error as a function of run position, so that we can examine whether serve error varies depending on where the serve is in its run. The model estimates for serve error rate should roughly match the values in the “ALL TEAMS” data summaries above, but will not necessarily match exactly because the model includes a random effect for the individual server (to try and account for variations due to individual servers).

| Run position | Serve error rate model estimate |

|---|---|

| 1 | 0.160 |

| 2 | 0.162 |

| 3 | 0.141 |

| 4 | 0.153 |

| 5 | 0.165 |

Significance of the run position term: p=0.303

There is some variability in serve error rate depending on the run position, but overall run_position is not significant (that is, the model that uses a run position term to explain serve error does not fit the data any better than a model without this term).

We can do the same thing for sideout rate as a function of run position:

| Team | Run position | Number of serves | Number of sideouts | Sideout rate |

|---|---|---|---|---|

| ALL TEAMS | 1 | 16308 | 10963 | 0.672 |

| ALL TEAMS | 2 | 5178 | 3422 | 0.661 |

| ALL TEAMS | 3 | 1694 | 1084 | 0.640 |

| ALL TEAMS | 4 | 580 | 374 | 0.645 |

| ALL TEAMS | 5 | 200 | 122 | 0.610 |

| Run position | Sideout rate model estimate |

|---|---|

| 1 | 0.674 |

| 2 | 0.663 |

| 3 | 0.644 |

| 4 | 0.652 |

| 5 | 0.618 |

Significance of the run position term: p=0.038

The run_position term is significant in this model, suggesting that the sideout rate falls during a serve run. That is, once I have reached the third serve of a run, I am actually slightly more likely to win the point (and hence continue the run) than would normally be the case.

3.1 Timeouts

What about timeouts? Does calling a timeout during a run of serves affect the serve error or sideout rate? First of all, we fit the same models as above, but with an additional term for whether or not the serve followed a timeout. That is, the serve error/sideout rate is estimated separately for each run position, along with an additional adjustment for timeout. The timeout adjustment applies equally to all serve run positions.

| Factor level | Serve error rate model estimate (General play) | Serve error rate model estimate (Followed timeout) |

|---|---|---|

| Run position 1 | 0.163 | 0.140 |

| 2 | 0.166 | 0.142 |

| 3 | 0.149 | 0.127 |

| 4 | 0.163 | 0.139 |

| 5 | 0.170 | 0.146 |

Significance of the timeout term: p=0.027

Note that the error rate estimates here (for run position 1–5) are slightly different than in the previous model — because now we are additionally accounting for the effect of timeouts. Serves following timeouts have a significantly lower error rate, just as we found previously.

| Factor level | Sideout rate model estimate (General play) | Sideout rate model estimate (Followed timeout) |

|---|---|---|

| Run position 1 | 0.672 | 0.688 |

| 2 | 0.661 | 0.678 |

| 3 | 0.642 | 0.659 |

| 4 | 0.651 | 0.668 |

| 5 | 0.621 | 0.639 |

Significance of the timeout term: p=0.225

Although the fitted sideout rates are marginally higher after timeout, the uncertainty around this effect is large (hence the timeout effect is not significant: the data are compatible with an effect of zero). We can’t conclude, given the data available, that sideout rate is affected by timeout.

These two models assume that timeouts affect all serves equally. Perhaps we believe that timeouts might affect serve error rate differently depending on the serve run position (for example, we might think that a timeout after one successful serve will not have any effect, but a timeout after a run of two serves might). We can also test this, but this time we need to fit a model that estimates a separate error rate for each run position, both during normal play and after timeout. In this case, rather than try and show the data summary (it is becoming rather complex) we just show the comparison of the two models:

## Data: srunxt

## Models:

## mfit_sretm: error ~ run_position + serve_category + (1 | player_name)

## mfit_sreti: error ~ run_position * serve_category + (1 | player_name)

## Df AIC BIC logLik deviance Chisq Chi Df Pr(>Chisq)

## mfit_sretm 7 19491 19547 -9738 19477

## mfit_sreti 11 19495 19583 -9736 19473 3.79 4 0.44

The test indicates that the second model does not provide a significantly better fit to the data. In other words, there is no particular evidence in this data that timeouts affect serve error rate differently depending on the run position of the serve.

And for sideout rate:

## Data: srunxt

## Models:

## mfit_srsotm: serve_loss ~ run_position + serve_category + (1 | team)

## mfit_srsoti: serve_loss ~ run_position * serve_category + (1 | team)

## Df AIC BIC logLik deviance Chisq Chi Df Pr(>Chisq)

## mfit_srsotm 7 28537 28593 -14262 28523

## mfit_srsoti 11 28544 28632 -14261 28522 1.19 4 0.88

The data don’t support the idea that timeouts have a different effect (on sideout rate this time) depending on where in the serve run they are called.
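The p-values in the two model comparisons can be recovered from the reported Chisq values. Each interaction model has 11 parameters against 7 for the simpler model, so the test statistic is compared with a chi-squared distribution on 4 degrees of freedom. The Python sketch below (the original output comes from R) uses the closed-form tail probability that holds for 4 degrees of freedom; the function name is illustrative.

```python
import math


def chi2_sf_4df(x):
    """Upper tail probability of a chi-squared distribution with 4 degrees of freedom."""
    return math.exp(-x / 2) * (1 + x / 2)


# Chisq values taken from the two model comparison outputs above.
p_serve_error = chi2_sf_4df(3.79)
p_sideout = chi2_sf_4df(1.19)
```

Rounded to two decimal places these give 0.44 and 0.88, matching the Pr(>Chisq) column in each output.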

We can double-check this by eyeballing the data, and by explicitly testing the effect of timeout on sideout rate at each position in the serve run (i.e. fitting a new model for each serve run position, and testing whether timeout has a significant effect). In this case we only compare post-timeout serves to serves during general play (i.e. not using data from serves after technical timeouts or first serve of a set). Compare the “Sideout rate (general play)” and “Sideout rate (followed timeout)” columns in the following table:

| Run position | Number of serves (general play) | Number of sideouts (general play) | Sideout rate (general play) | Number of serves (followed timeout) | Number of sideouts (followed timeout) | Sideout rate (followed timeout) | p-value for difference from general play |

|---|---|---|---|---|---|---|---|

| 1 | 15047 | 10090 | 0.671 | 139 | 97 | 0.698 | 0.486 |

| 2 | 4223 | 2788 | 0.660 | 687 | 460 | 0.670 | 0.611 |

| 3 | 1154 | 739 | 0.640 | 439 | 285 | 0.649 | 0.797 |

| 4 | 395 | 251 | 0.635 | 147 | 101 | 0.687 | 0.259 |

| 5 | 152 | 92 | 0.605 | 28 | 19 | 0.679 | 0.459 |
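A quick sanity check on the last column is a plain two-proportion z-test at each run position. This is not the test used for the table, which comes from a fitted model for each position, so the p-values will not match exactly. The Python sketch below (the original analysis was in R) uses only the counts in the table; the function name is illustrative.

```python
import math

# run position: (serves, sideouts) in general play, then (serves, sideouts) after timeout
rows = {
    1: (15047, 10090, 139, 97),
    2: (4223, 2788, 687, 460),
    3: (1154, 739, 439, 285),
    4: (395, 251, 147, 101),
    5: (152, 92, 28, 19),
}


def two_proportion_p(n1, k1, n2, k2):
    """Two-sided p-value of a pooled two-proportion z-test."""
    pooled = (k1 + k2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (k2 / n2 - k1 / n1) / se
    # Two-sided tail probability of a standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


p_values = {position: two_proportion_p(*row) for position, row in rows.items()}
```

The simple test leads to the same conclusion as the table: none of the run positions shows a significant difference in sideout rate after a timeout.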

3.2 Calling timeouts during serve runs

When during serve runs are timeouts called? After how many breakpoints (points won on serve)?

| Number of breakpoints | Number of serves | Number of timeouts | Timeout rate |

|---|---|---|---|

| 0 | 16308 | 139 | 0.009 |

| 1 | 5178 | 687 | 0.133 |

| 2 | 1694 | 439 | 0.259 |

| 3 | 580 | 147 | 0.253 |

| 4 | 200 | 28 | 0.140 |

Are multiple timeouts ever called during a single serve run?

Rarely. There were 1407 serve runs in which at least one timeout was called. Two timeouts were called in only 53 of these.